YouTube-UGC Dataset

This YouTube dataset is a sample drawn from thousands of User Generated Content (UGC) videos uploaded to YouTube and distributed under the Creative Commons license. It was created to advance research on video compression and quality assessment of UGC videos.

What is a UGC video clip?

UGC videos are uploaded by users and creators. These videos are not always professionally curated and often suffer from perceptual artifacts. For the purpose of this dataset, we've selected original videos with specific and sometimes substantial perceptual quality issues, like blockiness, blur, banding, noise, jerkiness, and so on.

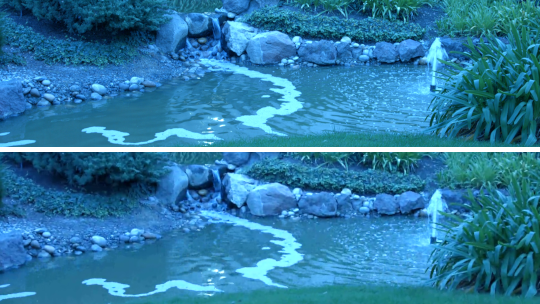

Challenges in UGC

A common assumption in much video quality and compression research is that the original video is pristine (as in the top frame), and that any operation on the original (processing, compression, etc.) makes it worse. Most research measures how good the resulting video is by comparing it to the original. However, this assumption breaks down in practice, as most uploads are not pristine (as in the bottom frame).

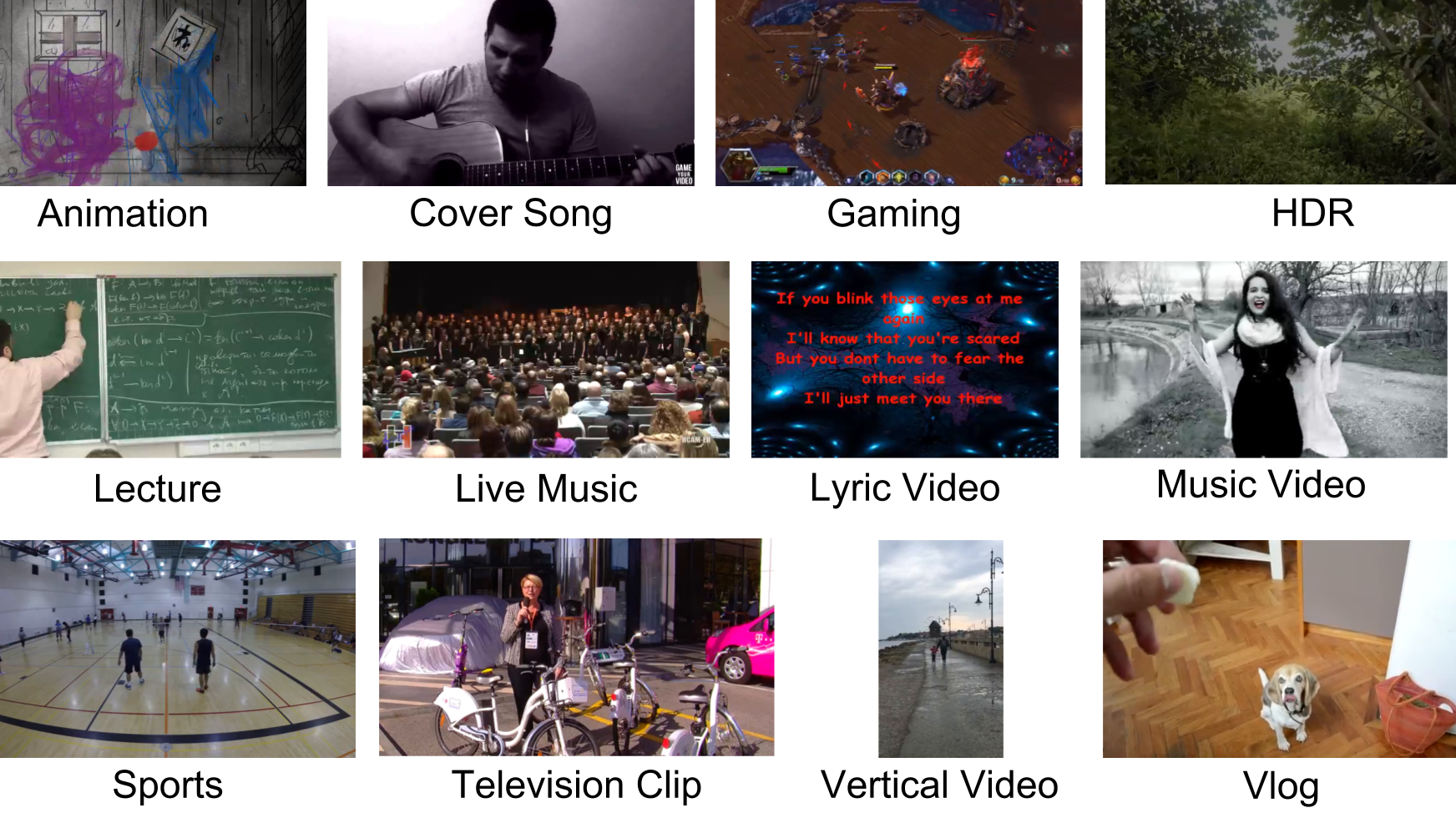

Dataset Specs

- Around 1500 video clips with a duration of 20 seconds each.

- Categories

  - Animation, Cover Song, Gaming, HDR, How-To, Lecture, Live Music, Lyric Video, Music Video, News Clip, Sports, Television Clip, Vertical Video, Vlog, and VR

- Resolutions

  - 360P, 480P, 720P, and 1080P for all categories (except for HDR and VR)

  - 4K for HDR, Gaming, Sports, Vertical Video, Vlog, and VR genres.

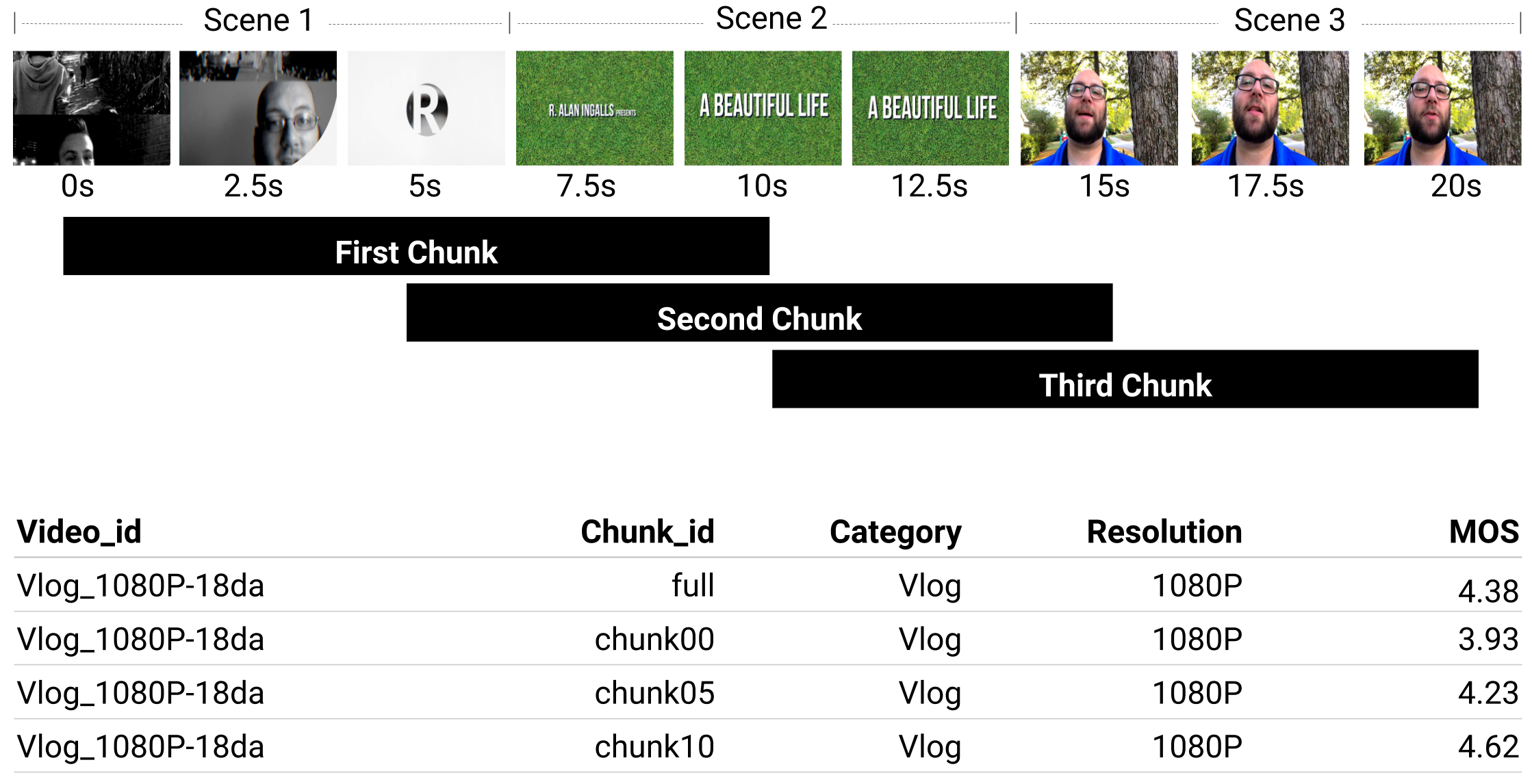

Subjective Quality Scores

- Mean Opinion Scores (MOS) are available for all video clips.

  - MOS for entire video clips

    - All video clips were rated by 100+ subjects using crowdsourcing.

    - The MOS range is [1, 5], where 1 means bad quality and 5 means excellent quality.

  - MOS for chunks

    - Additional MOS for three overlapping 10-second chunks (starting at 0, 5, and 10 seconds) are also provided to investigate the influence of scene changes.

- Differential MOS (DMOS) for selected content categories (Gaming, Sports, and Vlog)

  - Three VP9 variants: Video-On-Demand (VOD), Video-On-Demand with Lower Bitrate (VODLB), and Constant Bitrate (CBR), using the recommended VP9 settings and target bitrates.
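
As a rough illustration of the scores described above, the sketch below computes a MOS as the plain mean of individual ratings on the [1, 5] scale and lists the three overlapping 10-second chunk windows of a 20-second clip. The function names and the sample ratings are hypothetical, and the plain mean is an assumption: the dataset's actual aggregation (for example, any rater screening) may differ.

```python
# Clip and chunk timing as stated in the dataset description.
CHUNK_DURATION_S = 10
CHUNK_STARTS_S = (0, 5, 10)


def mean_opinion_score(ratings):
    """Return the arithmetic mean of ratings, each expected in [1, 5].

    Assumption: a plain mean with no rater screening or outlier removal.
    """
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ratings must lie in [1, 5]")
    return sum(ratings) / len(ratings)


def chunk_windows():
    """Return (start, end) in seconds for each overlapping chunk."""
    return [(start, start + CHUNK_DURATION_S) for start in CHUNK_STARTS_S]


# Made-up ratings, for illustration only.
sample_ratings = [4, 5, 3, 4, 4]
print(mean_opinion_score(sample_ratings))
print(chunk_windows())
```

Each window overlaps its neighbor by 5 seconds, so together the three chunks cover the full 20-second clip.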

UGC Content Labels

- 600+ labels for studying the relationship between UGC content and perceptual quality.

- Each video was assigned 12 candidate YouTube-8M (YT8M) labels, which were then refined through a crowdsourced subjective test. Every label on each video was voted on by more than 10 subjects. The label confidence is defined as the number of votes a label received divided by the number of times it was shown.
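
The label confidence defined above is a simple ratio. A minimal sketch follows; the function name, argument names, and the example counts are hypothetical and do not come from the dataset files.

```python
def label_confidence(votes, shows):
    """Return votes / shows for one label on one video.

    votes: number of subjects who voted for the label.
    shows: number of times the label was shown to subjects.
    """
    if shows <= 0:
        raise ValueError("the label must have been shown at least once")
    if not 0 <= votes <= shows:
        raise ValueError("votes must be between 0 and shows")
    return votes / shows


# Made-up counts: 9 of 12 subjects who saw the label voted for it.
print(label_confidence(9, 12))
```

A confidence near 1 means nearly every subject who saw the label agreed that it applied to the video.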